We are often asked questions such as:

“How does it work?”

“Which guardrails are in place?”

“Is this an ethical system?”

⇓

Below is a description of how the AI-Powered PMP® Exam Prep Simulator currently works.

The purpose of the AI-Powered PMP® Exam Prep Simulator is to create a learning environment that augments the experience of both learners and trainers.

The AI-Powered PMP® Exam Prep Simulator is built on multiple layers of knowledge:

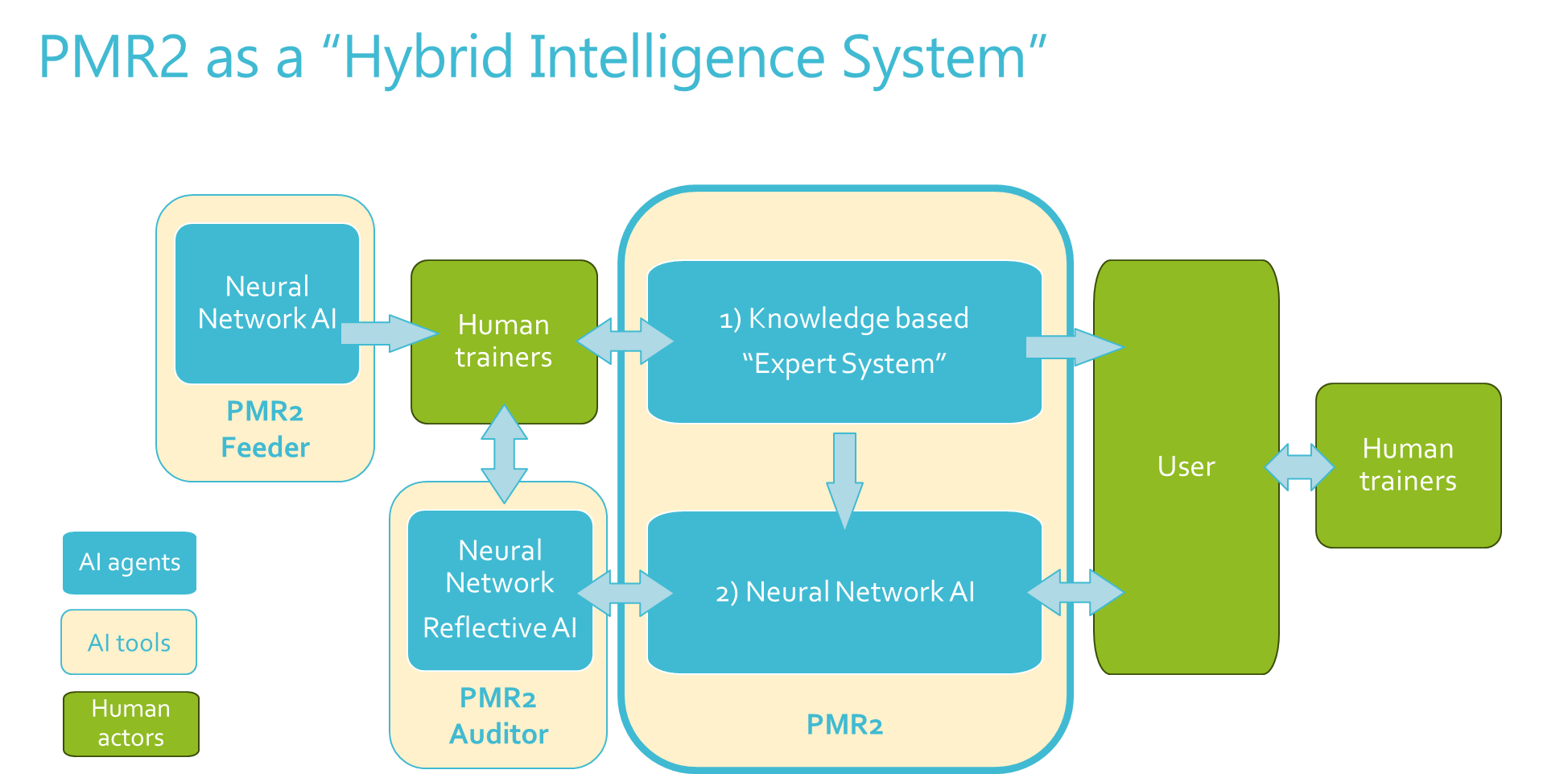

1) The first layer is a knowledge base, the “Expert System”, built with the help of AI (the “PMR2 Feeder”). All generated content, including every question, answer, explanation and Learning Card, has been verified and integrated by human experts.

2) The second layer is based on OpenAI's large language model (LLM). This is the conversation layer, in which users interact with the LLM, ask questions and learn new concepts from the AI. Human trainers are critical at this level as well: carefully crafted prompts guide the LLM's answers so that the topics discussed stay relevant to the purpose of the AI-Powered PMP® Exam Prep Simulator, i.e. passing the PMP exam.
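As an illustration only, the prompt layer can be pictured as a fixed, trainer-written system message that is placed at the start of every request sent to the model. The sketch below assumes the chat-style message format (a list of `role`/`content` dictionaries) used by OpenAI's Chat Completions API; the prompt text and the `build_messages` helper are hypothetical and are not the simulator's actual prompts or code.

```python
# Hypothetical system prompt: NOT the simulator's real prompt text.
SYSTEM_PROMPT = (
    "You are a PMP exam preparation tutor. "
    "Only discuss topics relevant to passing the PMP exam. "
    "If the learner asks about something unrelated, briefly say so "
    "and steer the conversation back to exam preparation."
)


def build_messages(history, user_question):
    """Assemble a chat-style message list with the trainer-written
    system prompt always placed first.

    history: list of previous {"role": ..., "content": ...} dicts.
    user_question: the learner's new question.
    """
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    # Drop any system messages carried over in the history so the
    # trainer-written prompt is the only one in effect.
    messages.extend(m for m in history if m["role"] != "system")
    messages.append({"role": "user", "content": user_question})
    return messages
```

The resulting list would then be passed as the `messages` argument of a chat request. The design choice illustrated here is that the human-authored prompt is applied on every turn rather than only at the start of a session.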

The interplay of these two layers (Expert System and LLM) is observed by a second neural-network agent, the “PMR2 Auditor”, in a role we call the “AI reflective agent”. The “PMR2 Auditor” observes the system and recommends improvements to the human trainers. Its objective is to identify potential biases, mistakes, inaccuracies, repeated topics and missing topics, and to assess the confidence level of the explanations; in general, it supports the human trainers in monitoring the quality of the system.
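A minimal sketch, under assumed names, of how the “PMR2 Auditor” role could be represented: the auditor only produces findings in the categories listed above, and a finding reaches the roadmap only after a human trainer approves it and assigns a priority. The `Category`, `Finding` and `ReviewQueue` names are hypothetical illustrations, not the system's actual implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    # Finding categories taken from the auditor's stated objectives.
    BIAS = "potential bias"
    LOW_CONFIDENCE = "low-confidence explanation"
    MISTAKE = "mistake"
    INACCURACY = "inaccuracy"
    REPEATED_TOPIC = "repeated topic"
    MISSING_TOPIC = "missing topic"


@dataclass
class Finding:
    category: Category
    item_id: str            # hypothetical ID of a question or Learning Card
    note: str
    approved: bool = False  # set only when a human trainer approves


class ReviewQueue:
    """Auditor findings are recommendations; nothing reaches the
    roadmap without an explicit human decision."""

    def __init__(self):
        self.pending = []
        self.roadmap = []

    def recommend(self, finding):
        # Called by the auditor: adds to pending, never to the roadmap.
        self.pending.append(finding)

    def approve(self, finding, priority):
        # Called by a human trainer: moves the finding to the roadmap
        # with a human-assigned priority (lower number = higher priority).
        self.pending.remove(finding)
        finding.approved = True
        self.roadmap.append((priority, finding))
        self.roadmap.sort(key=lambda entry: entry[0])
```

The point of the separation is that `recommend` and `approve` belong to different actors: the auditor can only suggest, while prioritisation stays with the human trainers.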

All improvements, both those identified by the “PMR2 Auditor” and other planned ones, will be added to the AI-Powered PMP® Exam Prep Simulator roadmap according to priorities set by the human trainers in collaboration with the users.

Note: The role of the AI reflective agent is focused on making sure that human expertise stays relevant in the workings of the AI-Powered PMP® Exam Prep Simulator. This matters when mistakes are made, so that they are caught, and probably even more when they are not, because it makes sure that project management experts keep themselves relevant!

——————–

Much of this setup is based on insights from Professor Catholijn Jonker, full professor of Interactive Intelligence at the Faculty of Electrical Engineering, Mathematics and Computer Science of Delft University of Technology.

Any mistakes are, of course, our own.